How Graphify and LLM Knowledge Bases are Killing RAG

How Graphify and LLM Knowledge Bases are Reshaping MarTech

In the fast-paced world of marketing technology and artificial intelligence, innovation moves at lightning speed. A recent buzz on X highlighted a groundbreaking development that perfectly encapsulates this rapid evolution: the emergence of Graphify, a tool that brings Andrej Karpathy's visionary LLM Knowledge Bases workflow to life.

The Challenge with Traditional RAG

For years, Retrieval-Augmented Generation (RAG) has been the dominant paradigm for giving Large Language Models (LLMs) access to proprietary data. While effective, RAG often involves complex setups: running a vector database, chunking documents into arbitrary segments, and performing similarity searches over those chunks. This approach, though powerful, can feel like a 'black box', making it difficult to trace an LLM's answer back to its original source data.
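To make the chunk-and-search pattern concrete, here is a minimal sketch of the retrieval half of RAG. It is an illustration under stated assumptions, not any particular product's pipeline: the function names are hypothetical, and a toy bag-of-words count stands in for the embedding model and vector database a real setup would use.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, size: int = 8) -> list[str]:
    """Split text into fixed-size word windows, ignoring sentence boundaries."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]
```

Notice that the chunk boundaries fall wherever the word count says, not where an idea ends, and that the result is a ranked list of fragments with no record of how they relate to each other. That is the traceability gap the rest of this article is about.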

Karpathy's Vision: A Self-Healing Knowledge Base

Andrej Karpathy, a renowned AI researcher, proposed a more elegant and transparent solution: an LLM Knowledge Base architecture that bypasses the complexities of traditional RAG for mid-sized datasets. His vision involves treating the LLM itself as a 'research librarian' that actively compiles, lints, and interlinks Markdown (.md) files. Markdown, being LLM-friendly and compact, becomes the 'source of truth'.

This architecture operates in three key stages:

Data Ingest: Raw materials (research papers, code repositories, datasets, and web articles) are collected into a raw/ directory. Karpathy emphasizes converting web content into Markdown files, ensuring even images are stored locally for LLM vision capabilities.

The Compilation Step: This is where the magic happens. The LLM doesn't just index files; it 'compiles' them. It reads the raw data and constructs a structured wiki, generating summaries, identifying key concepts, creating encyclopedia-style articles, and, critically, establishing backlinks between related ideas.

Active Maintenance (Linting): The knowledge base is dynamic. The LLM continuously performs 'health checks' or 'linting' passes, scanning the wiki for inconsistencies, missing information, or new connections. This creates a self-healing, auditable, and human-readable knowledge system.
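The structural part of a linting pass can be sketched without an LLM at all. The example below is a rough illustration, assuming the compiled wiki is a folder of Markdown files that link to each other with Obsidian-style `[[wikilinks]]`; the function names and the report format are hypothetical, and judging factual inconsistencies would still be the LLM's job.

```python
import re
from pathlib import Path

# Captures the target of [[Page]], [[Page|alias]] and [[Page#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def load_wiki(root: str) -> dict[str, str]:
    """Read every .md file under root into {page name: text}."""
    return {p.stem: p.read_text(encoding="utf-8") for p in Path(root).rglob("*.md")}


def lint_wiki(pages: dict[str, str]) -> dict[str, list]:
    """Report links to pages that do not exist and pages nothing links to."""
    links = {
        name: {target.strip() for target in WIKILINK.findall(text)}
        for name, text in pages.items()
    }
    broken = sorted(
        (src, dst) for src, dsts in links.items() for dst in dsts if dst not in pages
    )
    linked_to = set().union(*links.values())
    orphans = sorted(name for name in pages if name not in linked_to)
    return {"broken_links": broken, "orphans": orphans}
```

Running `lint_wiki(load_wiki("wiki/"))` (the folder name is an assumption) would produce a short to-do list: broken links are candidates for new articles, and orphan pages are candidates for new backlinks.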

Graphify: Bringing the Vision to Life

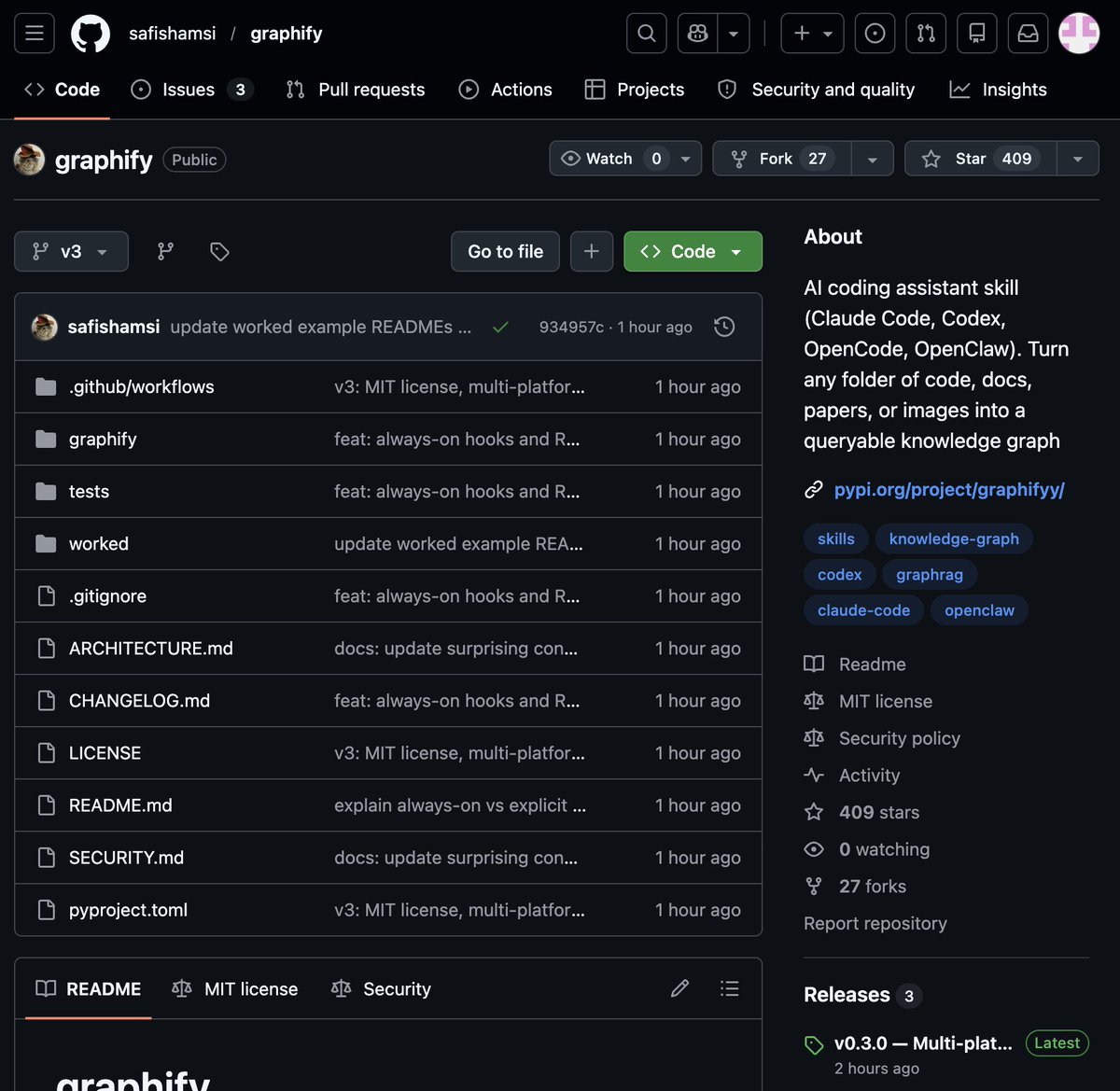

Just 48 hours after Karpathy outlined his workflow, Graphify emerged on GitHub, turning this theoretical framework into a practical tool. Graphify allows users to transform any folder into a navigable knowledge graph with a single command. Its integration with tools like Claude Code means marketers and developers can point it at any folder and start exploring how its contents connect.

Key features and benefits of Graphify include:

Navigable Knowledge Graph: A visual and interactive representation of all information within a folder.

Obsidian Vault & Wiki: Automatically generates an Obsidian vault with backlinked articles and a wiki that maps concept clusters.

Plain English Q&A: Enables natural language queries over entire codebases or research folders, answering questions like "What calls this function?" or "What connects these two concepts?"

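Unprecedented Token Efficiency: Uses a reported 71.5x fewer tokens per query compared to reading raw files. For illustration, at that ratio a question that would otherwise require reading 143,000 tokens of raw files would need about 2,000. This isn't just an improvement; it's a paradigm shift in how AI agents can reason over large datasets.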

Broad Support: Compatible with code in 13 programming languages, PDFs, images via Claude Vision, and Markdown files.
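To show what kind of structure makes questions like "What calls this function?" or "What connects these two concepts?" cheap to answer, here is a small sketch built on Python's standard `ast` module. It is not Graphify's implementation or API; the function names are hypothetical, and it only handles direct calls between top-level functions in a single Python source string.

```python
import ast
from collections import defaultdict, deque


def build_call_graph(source: str) -> dict[str, set[str]]:
    """Map each top-level function to the plain names it calls."""
    graph = {}
    for node in ast.parse(source).body:
        if isinstance(node, ast.FunctionDef):
            graph[node.name] = {
                sub.func.id
                for sub in ast.walk(node)
                if isinstance(sub, ast.Call) and isinstance(sub.func, ast.Name)
            }
    return graph


def callers_of(graph: dict[str, set[str]], target: str) -> list[str]:
    """Answer 'what calls this function?'."""
    return sorted(fn for fn, callees in graph.items() if target in callees)


def connect(graph: dict[str, set[str]], start: str, goal: str):
    """Answer 'what connects these two?' with a breadth-first shortest path."""
    neighbours = defaultdict(set)
    for src, dsts in graph.items():
        for dst in dsts:
            neighbours[src].add(dst)  # treat edges as undirected
            neighbours[dst].add(src)
    queue, seen = deque([[start]]), {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in sorted(neighbours[path[-1]]):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # no connection found
```

Once the graph exists, each question touches only a handful of nodes and edges instead of every file, which is the intuition behind the token savings described above.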

Implications for MarTech and AI Agencies

For marketing agencies specializing in MarTech and AI, Graphify and the underlying LLM Knowledge Base concept represent a significant leap forward. Imagine:

• Enhanced Content Creation: Rapidly generating comprehensive content briefs, articles, and marketing copy by querying an agency's vast internal knowledge base of past campaigns, client data, and industry research.

• Deeper Customer Insights: Transforming raw customer feedback, market research, and sales data into interconnected knowledge graphs, allowing AI agents to uncover nuanced patterns and insights for hyper-personalized campaigns.

• Optimized Campaign Strategies: Leveraging an AI-maintained wiki of campaign performance data, A/B test results, and competitor analysis to inform and optimize future marketing strategies with unparalleled efficiency.

• Streamlined Internal Knowledge Management: Onboarding new team members faster, democratizing access to institutional knowledge, and ensuring consistency across all client projects by maintaining a self-updating, auditable knowledge base.

This new paradigm for AI agents reasoning over large codebases and information repositories means that marketing agencies can now process and leverage information with an efficiency and depth previously unimaginable. It's about moving beyond simple data retrieval to true knowledge compilation and active maintenance by AI.

The Future is Here

The rapid development of tools like Graphify, directly inspired by leading AI thinkers, underscores the accelerating pace of innovation in AI. For marketing agencies, staying at the forefront of these advancements isn't just an advantage; it's a necessity. Embracing LLM Knowledge Bases means unlocking new levels of efficiency, insight, and strategic capability.

Ready to explore how cutting-edge AI and MarTech can transform your marketing efforts? Contact us today to learn more about leveraging these powerful tools for your business.